Lenovo PGX: A Powerful DGX Spark Alternative for Enterprise AI Workloads

Explore Lenovo PGX — a powerful DGX Spark alternative offering flexible local storage, enterprise-grade performance, and Lenovo Premier Support.

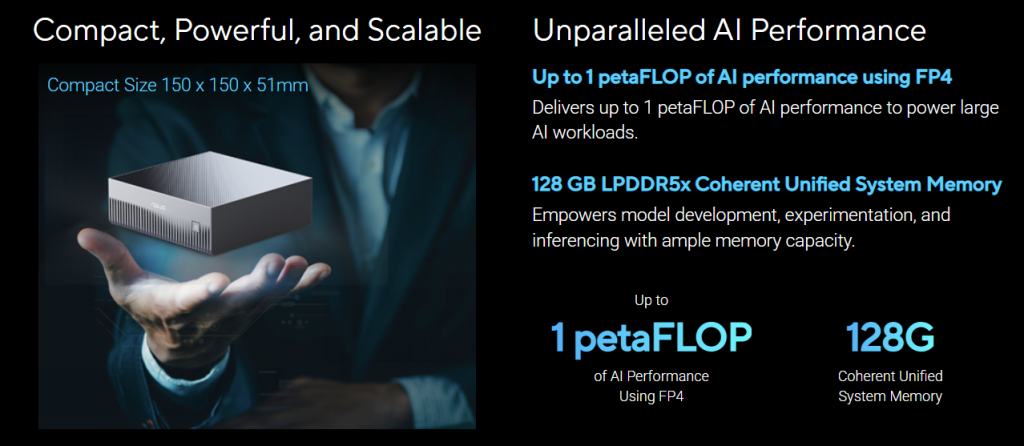

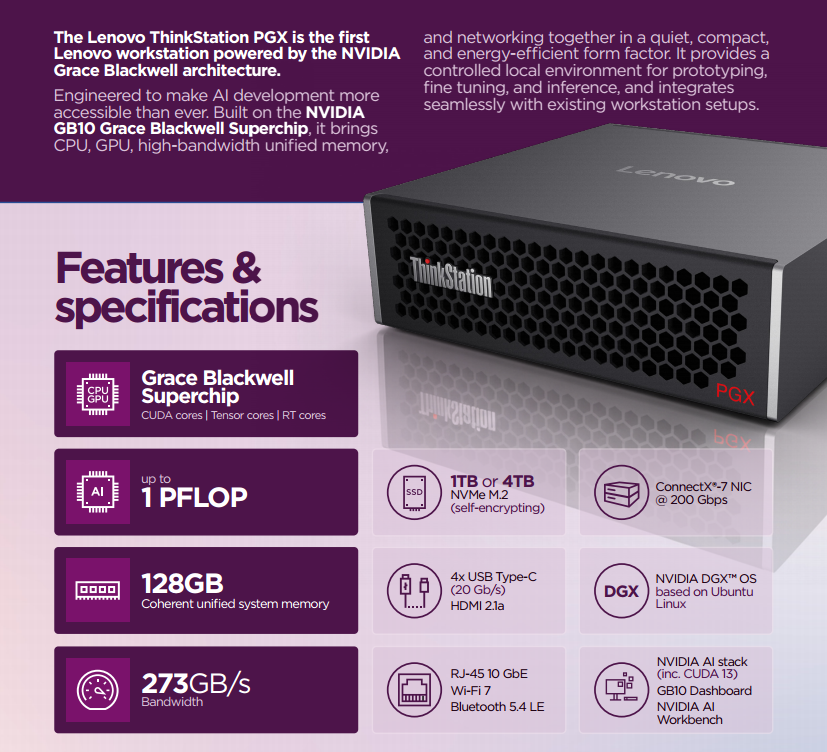

Designed for Sustained AI Workloads in Real Workspaces

Unlike compact accelerator-style systems primarily optimised for compute density, Lenovo PGX is engineered for sustained, professional deployment.

It offers:

- Balanced thermal design for extended GPU utilisation

- Acoustic optimisation suitable for office environments

- Stable power delivery for long-running AI training jobs

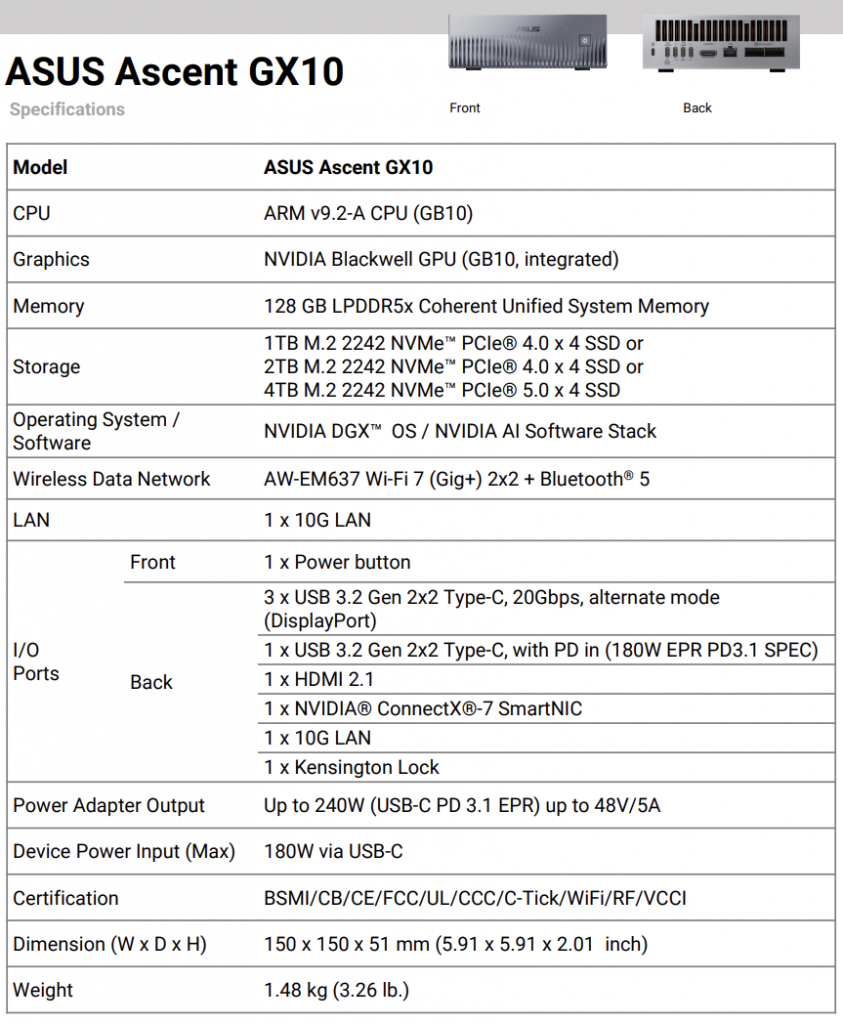

Key Advantage #1: Keep Your Drive Options

Lenovo PGX supports:

- Customisable local drive configurations

- High-speed storage for training datasets

- Flexible data architecture for iterative development

For AI teams in Singapore working with sensitive or high-volume data, maintaining local control can significantly improve workflow efficiency.

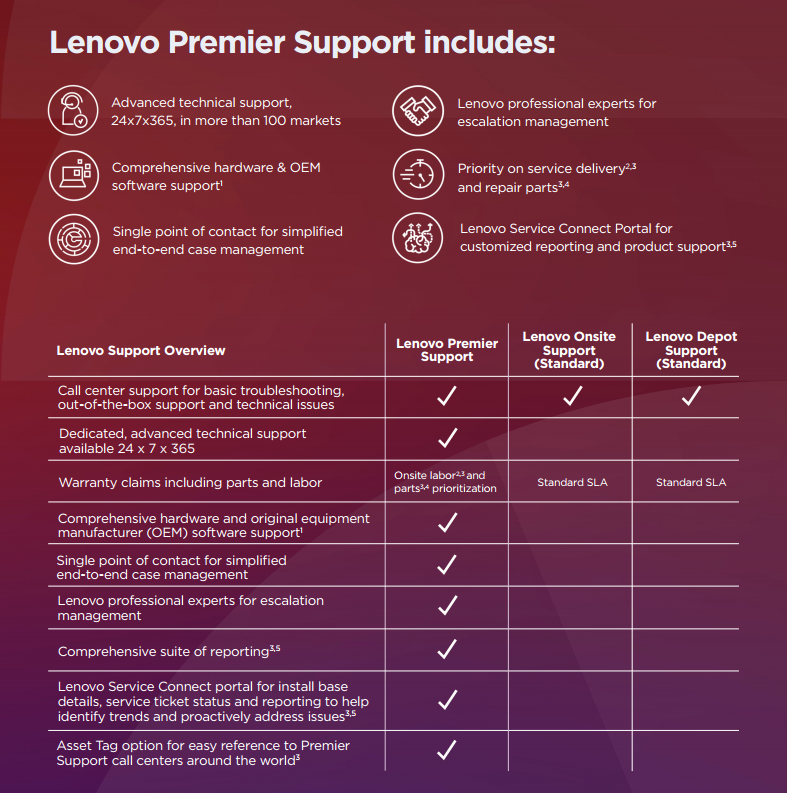

Key Advantage #2: Strong Local Premier Support in Singapore

For enterprises running production AI workloads, reliable local support reduces operational risk and downtime exposure.

Contact us to discuss how Lenovo PGX can support your AI, data science, and high-performance computing initiatives in Singapore.